Continental crop type mapping with openEO

Producing continental maps, for example to monitor agricultural production, requires massive amounts of Earth observation data and a processing environment suited to handling that data and extracting the information you need.

This case study explains how you can use openEO to produce continental crop type maps. Discover more about the possibilities, setup and challenges involved, including the collection of training data, computing power, DevOps techniques, parallel processing, FAIR principles, data catalogs and reproducibility.

The crop type mapping workflow

Terrascope is a member of the openEO platform, which makes it easier and more affordable to process and analyse large amounts of Earth observation data. By using standardized interfaces you can access and process data from various sources and also run the same algorithm on different cloud platforms, independent of any specific technology.

This crop type mapping workflow, developed by VITO Remote Sensing, is one example that we implemented to demonstrate this standards-based platform. It uses various types of data inputs and a machine learning model. The workflow is implemented in two parts, referred to as preprocessing and inference.

Preprocessing and inference

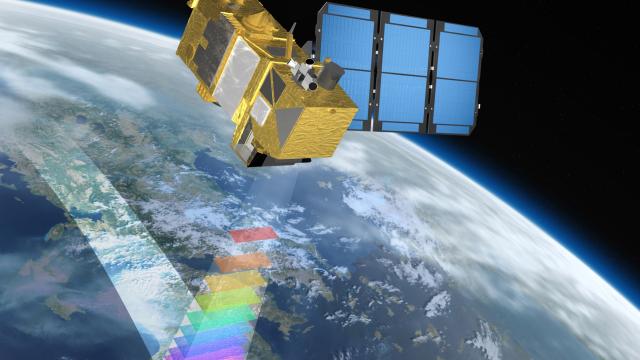

In the preprocessing part, data is collected over an 8-month period from various sources, including WorldCover data, Sentinel-2 L2A data, Sentinel-1 GRD data, and Copernicus 30m DEM data.

- The WorldCover data is used to create a binary mask, retaining only pixels that represent agriculture, to ensure that only relevant pixels are processed further.

- The Sentinel-2 L2A (atmospherically corrected) data is cloud-masked and converted into 10-daily median composites, with remaining gaps filled using linear interpolation.

- The Sentinel-1 GRD data is converted into sigma0 backscatter, and 10-daily median composites are derived.

- The Copernicus 30m DEM data is also added, and all this data is aligned to the same grid and combined into a single datacube.
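The compositing and gap-filling steps listed above can be illustrated for a single pixel and a single band. This is a minimal sketch in plain Python, not the openEO backend implementation: it assumes fixed 10-day windows counted from a start date (a calendar-based dekad definition would differ slightly), and all dates and reflectance values are made up for illustration.

```python
from datetime import date
from statistics import median


def window_index(day: date, start: date) -> int:
    """Index of the 10-day window (counted from `start`) that contains `day`."""
    return (day - start).days // 10


def median_composites(observations, start, n_windows):
    """Group (date, value) pairs into 10-day windows and take the median of each.

    Cloud-masked observations are passed as None and skipped; a window
    without any valid observation stays None (a gap).
    """
    buckets = [[] for _ in range(n_windows)]
    for day, value in observations:
        idx = window_index(day, start)
        if value is not None and 0 <= idx < n_windows:
            buckets[idx].append(value)
    return [median(b) if b else None for b in buckets]


def fill_gaps_linear(series):
    """Fill None entries by linear interpolation between valid neighbours.

    Leading and trailing gaps stay None: there is nothing to interpolate
    between.
    """
    filled = list(series)
    valid = [i for i, v in enumerate(series) if v is not None]
    for left, right in zip(valid, valid[1:]):
        step = (series[right] - series[left]) / (right - left)
        for i in range(left + 1, right):
            filled[i] = series[left] + step * (i - left)
    return filled


# One pixel, one band: (acquisition date, reflectance); None marks a cloudy pixel.
start = date(2021, 3, 1)
observations = [
    (date(2021, 3, 2), 0.20),
    (date(2021, 3, 7), 0.30),
    (date(2021, 3, 9), 0.22),
    (date(2021, 3, 14), None),  # cloud-masked, so the second window is empty
    (date(2021, 3, 23), 0.40),
    (date(2021, 3, 28), 0.44),
]
composites = median_composites(observations, start, n_windows=3)
gap_filled = fill_gaps_linear(composites)
```

Here the second window has no valid observation, so its composite is a gap that the interpolation step fills from the two neighbouring windows.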

In the second part, inference, the preprocessed datacube is fed into a machine learning model. The model takes the evolution of a pixel over time as input and does not take spatial context into account, because the multiple bands and the temporal profile were sufficient in this case. The model then outputs the crop type for each pixel in the datacube.
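To make the "temporal profile only, no spatial context" idea concrete, the sketch below classifies each pixel from its own time series and skips pixels outside the agriculture mask. It is an illustration under assumptions: `classify_profile` is a hypothetical stand-in for the trained model, the feature values are made up, and the use of WorldCover class code 40 (cropland) as the mask criterion is an assumption rather than something stated here.

```python
CROPLAND = 40  # ESA WorldCover class code for cropland (assumed mask criterion)


def classify_profile(profile):
    """Hypothetical stand-in for the trained model.

    `profile` is a list of per-timestep feature lists, e.g. [[ndvi, vv], ...].
    The rule (early versus late peak of the first feature) is illustrative only.
    """
    peak_step = max(range(len(profile)), key=lambda t: profile[t][0])
    return 1 if peak_step < len(profile) / 2 else 2


def classify_cube(cube, landcover, nodata=0):
    """Apply the classifier pixel by pixel.

    cube[t][y][x] holds the feature list of pixel (y, x) at timestep t.
    Each pixel is classified from its own temporal profile; neighbouring
    pixels are never read. Non-cropland pixels are set to `nodata`.
    """
    height, width = len(landcover), len(landcover[0])
    result = [[nodata] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            if landcover[y][x] != CROPLAND:
                continue
            profile = [cube[t][y][x] for t in range(len(cube))]
            result[y][x] = classify_profile(profile)
    return result


# Tiny datacube: 4 timesteps, 1 row, 3 pixels, features [ndvi, vv_backscatter_db].
landcover = [[40, 40, 10]]  # third pixel is tree cover and gets masked out
cube = [
    [[[0.3, -12.0], [0.2, -14.0], [0.6, -9.0]]],
    [[[0.8, -10.0], [0.3, -13.0], [0.6, -9.0]]],
    [[[0.5, -11.0], [0.7, -11.0], [0.6, -9.0]]],
    [[[0.2, -13.0], [0.9, -10.0], [0.6, -9.0]]],
]
crop_map = classify_cube(cube, landcover)
```

Because every pixel is handled independently, this kind of inference can be split over tiles and workers without any overlap between neighbouring tiles.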

Using openEO to run workflow and machine learning models

To run workflows on openEO, the algorithms must be translated into technology-agnostic openEO workflows, called "process graphs". In this case, the workflow was developed in Python. Although the resulting code looks like any other algorithm, openEO reduces the amount of Python code by handling all of the complexity (e.g. finding data, efficient reading, handling overlap, backscatter computation, compositing) on the backend side.
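As an illustration of what a process graph looks like, the sketch below builds one as a plain Python dictionary in the JSON structure defined by the openEO API: load Sentinel-2 data, reduce it to 10-daily median composites, and save the result. The collection id, extents and band names are assumptions for illustration, and this is not the graph used for the crop type map. In practice a client library generates this structure for you.

```python
import json

process_graph = {
    "load_s2": {
        "process_id": "load_collection",
        "arguments": {
            "id": "SENTINEL2_L2A",  # assumed collection id, differs per backend
            "spatial_extent": {"west": 5.0, "south": 51.0, "east": 5.2, "north": 51.2},
            "temporal_extent": ["2021-03-01", "2021-11-01"],  # illustrative dates
            "bands": ["B04", "B08"],
        },
    },
    "composite": {
        "process_id": "aggregate_temporal_period",
        "arguments": {
            "data": {"from_node": "load_s2"},
            "period": "dekad",
            # The reducer is itself a small child process graph.
            "reducer": {
                "process_graph": {
                    "median": {
                        "process_id": "median",
                        "arguments": {"data": {"from_parameter": "data"}},
                        "result": True,
                    }
                }
            },
        },
    },
    "save": {
        "process_id": "save_result",
        "arguments": {"data": {"from_node": "composite"}, "format": "GTiff"},
        "result": True,
    },
}


def referenced_nodes(value):
    """Yield node ids referenced via {"from_node": ...} in top-level arguments.

    Child graphs (such as the reducer) are skipped because their references
    point at nodes inside the child graph.
    """
    if isinstance(value, dict):
        if "from_node" in value:
            yield value["from_node"]
        elif "process_graph" not in value:
            for item in value.values():
                yield from referenced_nodes(item)
    elif isinstance(value, list):
        for item in value:
            yield from referenced_nodes(item)


# Basic sanity checks: every reference resolves and exactly one node is the result.
dangling = [
    ref
    for node in process_graph.values()
    for ref in referenced_nodes(node["arguments"])
    if ref not in process_graph
]
result_nodes = [name for name, node in process_graph.items() if node.get("result")]
payload = json.dumps(process_graph, indent=2)  # JSON form that is sent to a backend
```

The graph names only processes and data, not how they are executed, which is what lets the same workflow run on different backends.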

This is a huge benefit for data scientists who need to maintain the code and for reviewers who need to understand it to analyze the methodology or reproduce results, which is a strong advantage in terms of reproducibility and open science.

openEO was also used to run a machine learning model based on PyTorch. We used openEO "user-defined functions" to run arbitrary Python code that transforms xarray data structures as part of the openEO workflow. While openEO contains 100+ predefined functions, we still need user-defined functions to implement cases like deep learning that have not yet been standardized. Allowing user-defined code greatly expands the number of use cases that can be translated into openEO and is also convenient for cases where a full translation into openEO predefined functions would be expensive. Overall, this demonstrates the flexibility and adaptability of openEO in supporting a wide range of use cases for working with large-scale Earth observation data.

Large scale processing

The openEO platform makes it possible to run large-scale processing on multiple infrastructures, including Terrascope. This gives a reasonable amount of bandwidth and ensures that processing continues even if one backend experiences issues. This concept is referred to as ‘federated processing’, and is also a key element in Destination Earth, a part of the European Union digital strategy. More details on how we set up and performed the actual processing can be found in this document.
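The idea of spreading work over several backends, with processing continuing when one of them has issues, can be sketched as follows. The actual scheduling used for this map is not described here, so this is only an assumed approach: the area is split into 20 by 20 km tiles, tiles are assigned round-robin, and a failed tile is retried on the other backends. The backend names and the `fake_submit` function are hypothetical.

```python
TILE_SIZE_M = 20_000  # 20 by 20 km tiles


def make_tiles(west, south, east, north, size=TILE_SIZE_M):
    """Split a projected bounding box (in metres) into square tiles of `size` metres."""
    tiles = []
    y = south
    while y < north:
        x = west
        while x < east:
            tiles.append((x, y, min(x + size, east), min(y + size, north)))
            x += size
        y += size
    return tiles


def run_federated(tiles, backends, submit):
    """Spread tiles over backends round-robin; on failure, try the other backends.

    `submit(backend, tile)` is supplied by the caller and raises RuntimeError
    when a backend cannot process the tile. Returns a dict mapping each
    processed tile to the backend that handled it, plus the list of tiles
    that failed on every backend.
    """
    done, failed = {}, []
    for i, tile in enumerate(tiles):
        first = i % len(backends)
        order = backends[first:] + backends[:first]  # preferred backend first
        for backend in order:
            try:
                submit(backend, tile)
            except RuntimeError:
                continue  # this backend has issues, try the next one
            done[tile] = backend
            break
        else:
            failed.append(tile)
    return done, failed


def fake_submit(backend, tile):
    """Hypothetical job submission in which one backend is down."""
    if backend == "backend_b":
        raise RuntimeError("backend unavailable")


tiles = make_tiles(0, 0, 60_000, 40_000)  # 3 x 2 tiles
done, failed = run_federated(tiles, ["backend_a", "backend_b"], fake_submit)
```

In this run every tile ends up on `backend_a`, including those first offered to the unavailable `backend_b`.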

Available in the openEO editor

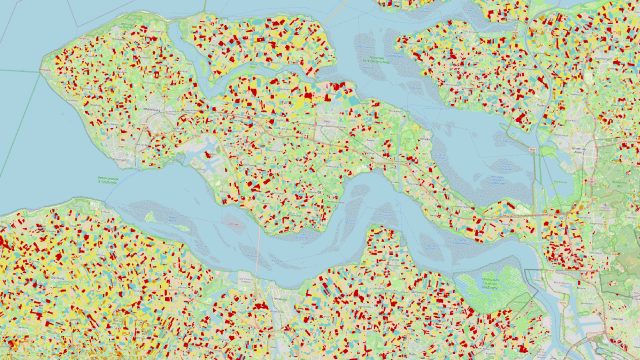

The continental crop type map produced via openEO consists of over 11,000 Cloud Optimized GeoTIFF files, which are accompanied by STAC metadata that contains links to input products for provenance.
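A STAC record for one output tile could look roughly like the sketch below, which builds a minimal STAC Item as a Python dictionary. The field layout follows the STAC Item specification, but the tile id, URLs, asset key and the choice of the `derived_from` link relation for provenance are assumptions for illustration, not the metadata actually published for this map.

```python
import json


def make_stac_item(tile_id, bbox, datetime_utc, cog_href, input_hrefs):
    """Build a minimal STAC Item for one Cloud Optimized GeoTIFF tile.

    `input_hrefs` lists the input products the tile was computed from; each
    becomes a link with relation type "derived_from".
    """
    west, south, east, north = bbox
    return {
        "type": "Feature",
        "stac_version": "1.0.0",
        "id": tile_id,
        "bbox": [west, south, east, north],
        "geometry": {
            "type": "Polygon",
            "coordinates": [[
                [west, south], [east, south], [east, north],
                [west, north], [west, south],
            ]],
        },
        "properties": {"datetime": datetime_utc},
        "assets": {
            "croptype": {  # hypothetical asset key
                "href": cog_href,
                "type": "image/tiff; application=geotiff; profile=cloud-optimized",
                "roles": ["data"],
            }
        },
        "links": [{"rel": "derived_from", "href": href} for href in input_hrefs],
    }


# All identifiers and URLs below are placeholders.
item = make_stac_item(
    tile_id="croptype-tile-0001",
    bbox=(5.0, 51.0, 5.3, 51.2),
    datetime_utc="2021-11-01T00:00:00Z",
    cog_href="https://example.com/croptype/tile-0001.tif",
    input_hrefs=[
        "https://example.com/inputs/sentinel2-product-a.json",
        "https://example.com/inputs/sentinel1-product-b.json",
    ],
)
item_json = json.dumps(item, indent=2)
```

Keeping the input links next to each tile lets a reviewer trace any pixel of the map back to the products it was computed from.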

However, managing and inspecting such a large volume of data is challenging, so the openEO platform team is exploring ways to allow automatic publishing into a STAC catalog and setting up viewing services for inspecting the results at a large scale.

The final map is available as an experimental collection in openEO platform and can be viewed in the openEO editor. This continental crop type map was created for demonstration purposes only, so please keep in mind that there are still some issues that need to be resolved before it can be used in operational services. The goal is to replace the map with a more accurate version by the end of 2023.

11,000

openEO produced more than 11,000 tiles of 20 by 20 km

6

The crop type map shows 6 classified crop categories

91,000

Over 91,000 processing (CPU) hours were spent on the platform for the production and testing of this workflow

Products and services

openEO

The openEO platform allows you to access and process data from various sources and also run the same algorithm on different cloud platforms.

WorldCover

The WorldCover data is used to create a binary mask, retaining only pixels that represent agriculture, to ensure that only relevant pixels are processed further.

Sentinel data

Sentinel-2 L2A data and Sentinel-1 GRD data were also used in the preprocessing part.